[ad_1]

Are you ready to bring more awareness to your brand? Consider becoming a sponsor for The AI Impact Tour. Learn more about the opportunities here.

Nous Research, a private applied research group known for publishing open-source work in the large language model (LLM) domain, has dropped a lightweight vision-language model called Nous Hermes 2 Vision.

Available via Hugging Face, the open-source model builds on the company’s previous OpenHermes-2.5-Mistral-7B model. It brings vision capabilities, including the ability to prompt with images and extract text information from visual content.

However, soon after launch, the model was found to be hallucinating more than expected, leading to glitches and the eventual renaming of the project to Hermes 2 Vision Alpha. The company is expected to follow this up with a more stable release, providing similar benefits but fewer glitches.

Nous Hermes 2 Vision Alpha

Named after Hermes, the Greek messenger of Gods, the Nous vision model is designed to be a system that navigates “the complex intricacies of human discourse with celestial finesse.” It taps the image data provided by a user and combines that visual information with its learnings to provide detailed answers in natural language.

VB Event

The AI Impact Tour

Connect with the enterprise AI community at VentureBeat’s AI Impact Tour coming to a city near you!

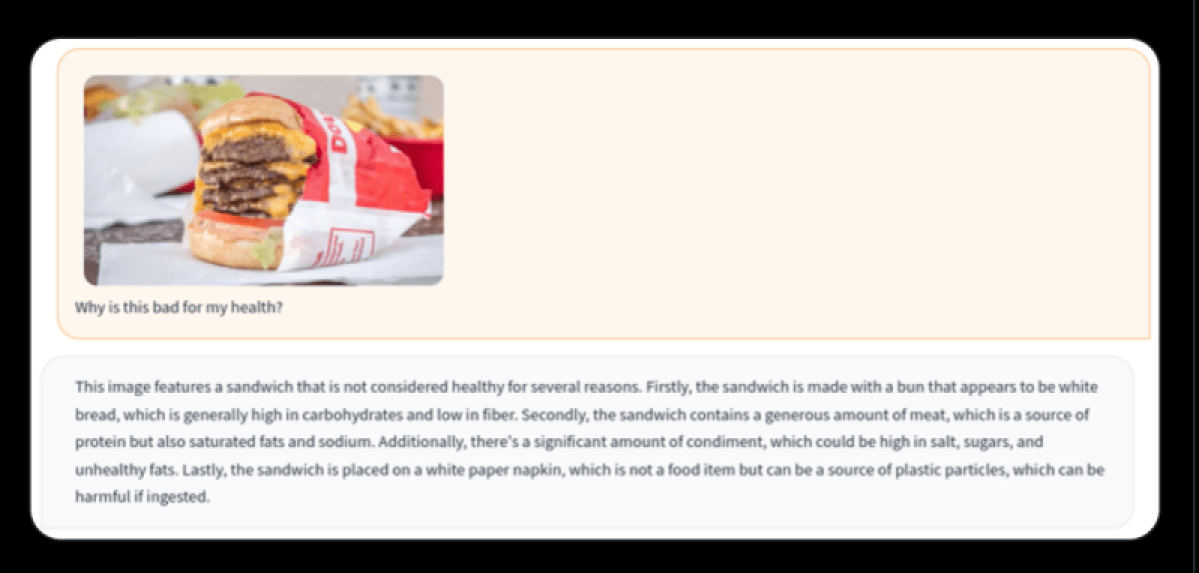

For instance, it could analyze a user’s image and detail different aspects of what it contains. The co-founder of Nous, who goes by Teknium on X, shared a test screenshot where the LLM was able to analyze a photo of a burger and figure out if it would be unhealthy to eat and explain why.

While ChatGPT, based on GPT-4V, also brings the ability to prompt with images, the open-source offering from Nous differentiates with two key enhancements.

First, unlike traditional approaches that rely on substantial 3B vision encoders, Nous Hermes 2 Vision harnesses SigLIP-400M. This not only streamlines the model’s architecture, making it more lightweight than its counterparts, but also helps boost performance on vision-language tasks.

Secondly, it has been trained on a custom dataset enriched with function calling. This allows users to prompt the model with a <fn_call> tag and extract written information from an image, like a menu or billboard.

“This distinctive addition transforms Nous-Hermes-2-Vision into a Vision-Language Action Model. Developers now have a versatile tool at their disposal, primed for crafting a myriad of ingenious automations,” the company wrote on the Hugging Face page of the model.

Other datasets used for training the model were LVIS-INSTRUCT4V, ShareGPT4V and conversations from OpenHermes-2.5.

Despite differentiations, issues remain at this stage

While the Nous vision-language model is available for research and development, early usage has shown that it is far from perfect.

Soon after the release, the co-founder dropped a post saying that something was wrong with the model and that it was hallucinating a lot, spamming EOS tokens, etc. Later, the model was renamed as an alpha release.

“I see people talk about ‘hallucinations’ and yes, it is quite bad. I was aware of it also since the based LLM is an uncensored model. I will make an updated version of this by the end of the month to resolve these problems,” Quan Nguyen, the research fellow leading the AI efforts at Nous, wrote on X.

Questions sent by VentureBeat in connection to issues remained unanswered at the time of writing.

That said, Nguyen did note in another post that the function calling capability still works well if the user defines a good schema. He also said he will launch a dedicated model for function calling if the user feedback is good enough.

So far, Nous Research has released 41 open-source models with different architectures and capabilities as part of its Hermes, YaRN, Capybara, Puffin and Obsidian series.

VentureBeat’s mission is to be a digital town square for technical decision-makers to gain knowledge about transformative enterprise technology and transact. Discover our Briefings.

[ad_2]

Source link