
For something so complex, large language models (LLMs) can be quite naïve when it comes to cybersecurity.

With a simple, crafty set of prompts, for instance, they can give up thousands of secrets. Or, they can be tricked into creating malicious code packages. Poisoned data injected into them along the way, meanwhile, can lead to bias and unethical behavior.

“As powerful as they are, LLMs should not be trusted uncritically,” Elad Schulman, cofounder and CEO of Lasso Security, said in an exclusive interview with VentureBeat. “Due to their advanced capabilities and complexity, LLMs are vulnerable to multiple security concerns.”

Schulman’s company aims to ‘lasso’ these heady problems: it launched out of stealth today with $6 million in seed funding from Entrée Capital, with participation from Samsung Next.

“The LLM revolution is probably bigger than the cloud revolution and the internet revolution combined,” said Schulman. “With that great advancement come great risks, and you can’t be too early to get your head around that.”


Jailbreaking, unintentional exposure, data poisoning

LLMs are a groundbreaking technology that has taken over the world and has quickly become, as Schulman described it, “a non-negotiable asset for businesses striving to maintain a competitive advantage.”

The technology is conversational, unstructured and situational, making it very easy for everyone to use — and exploit.

For starters, when manipulated the right way (via prompt injection or jailbreaking), models can reveal their training data, organizations’ and users’ sensitive information, proprietary algorithms and other confidential details.

Similarly, workers who unintentionally misuse these tools can leak company data, as was the case with Samsung, which ultimately banned use of ChatGPT and other generative AI tools altogether.

“Since LLM-generated content can be controlled by prompt input, this can also result in providing users indirect access to additional functionality through the model,” Schulman said.

Meanwhile, issues arise from data “poisoning,” in which training data is tampered with, introducing bias that compromises security, effectiveness or ethical behavior, he explained. At the other end is insecure output handling, in which outputs are not sufficiently validated and sanitized before they are passed to other components, users and systems.

“This vulnerability occurs when an LLM output is accepted without scrutiny, exposing backend systems,” according to a Top 10 list from the OWASP online community. Misuse may lead to severe consequences like XSS, CSRF, SSRF, privilege escalation or remote code execution.
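To make the output-handling risk concrete, here is a minimal Python sketch of one mitigation: treating model output as untrusted text and escaping it before it reaches a web page. The function name `render_llm_reply` and the CSS class are hypothetical, and escaping only addresses the XSS case; the other risks named above need their own controls.

```python
import html


def render_llm_reply(raw_output: str) -> str:
    """Escape model output before embedding it in an HTML page."""
    # html.escape turns <, >, & and quotes into entities, so any markup
    # the model emits is shown as text instead of being interpreted.
    safe_text = html.escape(raw_output, quote=True)
    return f'<div class="llm-reply">{safe_text}</div>'


# Example: a reply that contains a script tag is rendered inert.
untrusted = '<script>alert("xss")</script>'
print(render_llm_reply(untrusted))
```

The same principle applies wherever model output flows next: parameterize it before it reaches a database query, and never pass it to a shell or an `eval` call unchecked.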

OWASP also identifies model denial of service, in which attackers flood LLMs with requests, leading to service degradation or even shutdown.
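One common defense against request flooding is per-client rate limiting. The sketch below is a simple token bucket in Python; the class name, capacity and refill rate are illustrative assumptions, not values recommended by OWASP or used by any vendor mentioned here.

```python
import time


class TokenBucket:
    """Allow short bursts of requests while capping the sustained rate."""

    def __init__(self, capacity: int, refill_per_second: float, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock  # injectable so the limiter can be tested
        self.tokens = float(capacity)
        self.last_refill = clock()

    def allow(self) -> bool:
        """Return True if the caller may send one more request now."""
        now = self.clock()
        elapsed = now - self.last_refill
        # Add tokens for the time that has passed, up to the bucket capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last_refill = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

In practice a gateway would keep one bucket per user or API key and reject or queue requests when `allow()` returns `False`. Limits on prompt length and output tokens are a natural complement, since a single oversized request can be as costly as many small ones.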

Furthermore, an LLM’s software supply chain may be compromised by vulnerable components or services from third-party datasets or plugins.

Developers: Don’t trust too much

Of particular concern is over-reliance on a model as a sole source of information. This can lead to not only misinformation but major security events, according to experts.

In the case of “package hallucination,” for instance, a developer might ask ChatGPT to suggest a code package for a specific job. The model may then inadvertently provide an answer for a package that doesn’t exist (a “hallucination”).

Hackers can then publish a malicious code package under the hallucinated name. Once a developer finds that package and inserts it into a project, hackers have a backdoor into company systems, Schulman explained.

“This can exploit the trust developers place in AI-driven tool recommendations,” he said.
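One way a team might blunt this attack is to check every model-suggested dependency against a vetted allowlist before anything is installed. The Python sketch below assumes a hypothetical `APPROVED_PACKAGES` set maintained by the security team; the package name `fast-json-magic` is invented for illustration.

```python
import re

# Hypothetical allowlist maintained by the organization's security team.
APPROVED_PACKAGES = {"requests", "numpy", "pandas"}


def normalize(name: str) -> str:
    """Normalize a package name the way PEP 503 describes."""
    # Lowercase, and collapse runs of '-', '_' and '.' into a single '-'.
    return re.sub(r"[-_.]+", "-", name).lower()


def vet_suggestions(suggested: list[str]) -> dict[str, bool]:
    """Map each suggested package name to whether it is on the allowlist."""
    approved = {normalize(p) for p in APPROVED_PACKAGES}
    return {name: normalize(name) in approved for name in suggested}


# Example: one known package and one name the model may have made up.
print(vet_suggestions(["Requests", "fast-json-magic"]))
```

Anything that comes back `False` is not necessarily malicious, but it should be reviewed by a person before it goes into a build.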

Intercepting, monitoring LLM interactions

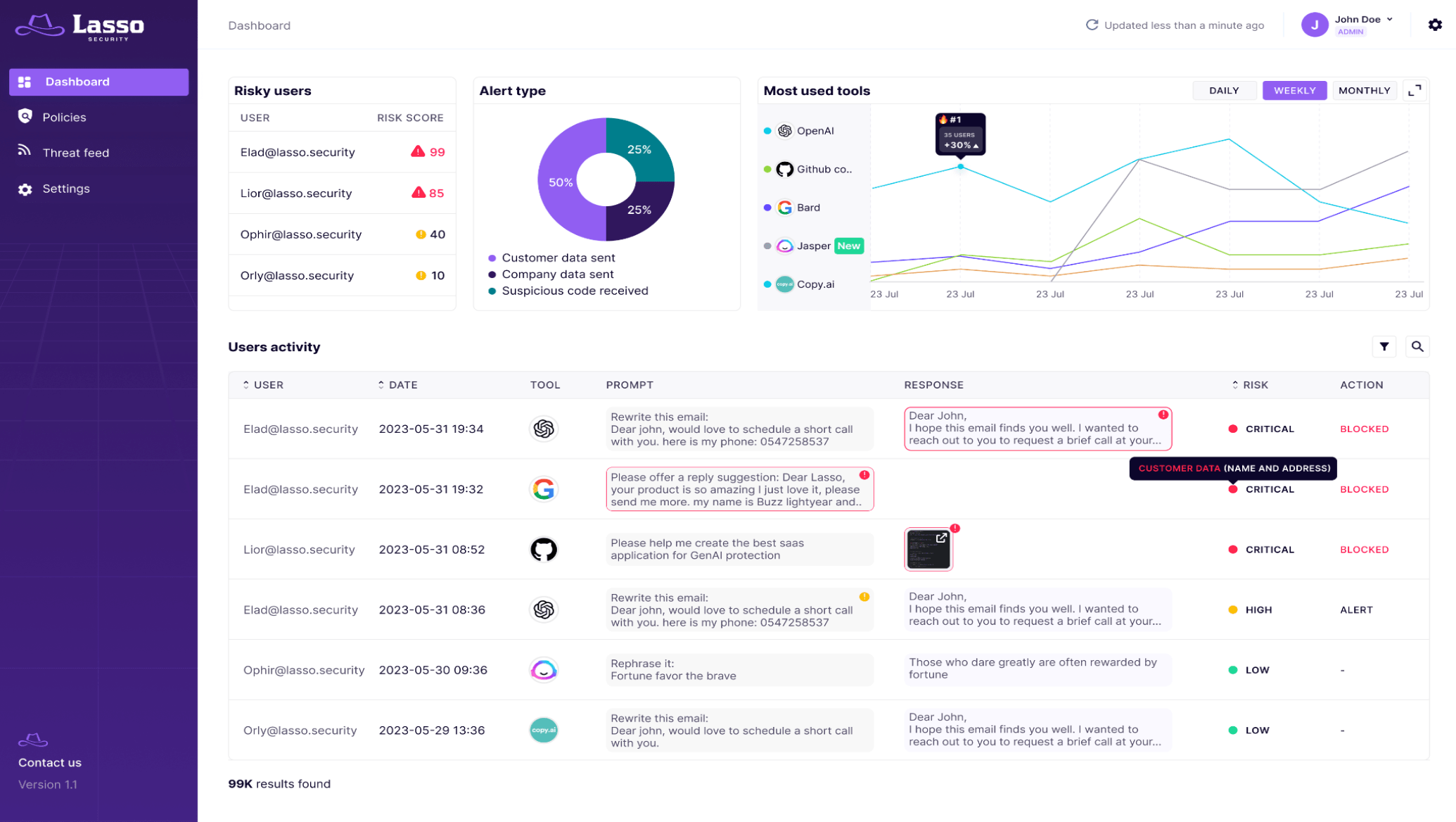

Put simply, Lasso’s technology intercepts interactions with LLMs.

That could be between employees and tools such as Bard or ChatGPT; agents like Grammarly connected to an organization’s systems; plugins linked to developers’ IDEs (such as Copilot); or backend functions making API calls.

An observability layer captures data sent to, and retrieved from, LLMs. Several layers of threat detection then apply data classifiers, natural language processing and Lasso’s own LLMs trained to identify anomalies, Schulman said. Response actions, such as blocking or issuing warnings, are also applied.
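The general pattern of intercepting a prompt, logging it, classifying it and then allowing, warning or blocking can be sketched in a few lines of Python. This is not Lasso’s implementation: the two regular expressions stand in for the data classifiers and models described above, and every name here (`inspect_prompt`, `Verdict`, `audit_log`) is hypothetical.

```python
import re
from dataclasses import dataclass, field

# Hypothetical detection rules; a real product would use far richer classifiers.
BLOCK_PATTERNS = {"private_key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----")}
WARN_PATTERNS = {"email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")}


@dataclass
class Verdict:
    action: str  # "allow", "warn" or "block"
    findings: list = field(default_factory=list)


# In-memory stand-in for an observability log.
audit_log = []


def inspect_prompt(user: str, prompt: str) -> Verdict:
    """Inspect a prompt before it is forwarded to an LLM and log the outcome."""
    blocked = [name for name, pat in BLOCK_PATTERNS.items() if pat.search(prompt)]
    warned = [name for name, pat in WARN_PATTERNS.items() if pat.search(prompt)]
    if blocked:
        verdict = Verdict("block", blocked + warned)
    elif warned:
        verdict = Verdict("warn", warned)
    else:
        verdict = Verdict("allow")
    # Record every interaction, whether or not it was flagged.
    audit_log.append({"user": user, "action": verdict.action, "findings": verdict.findings})
    return verdict
```

A caller would forward the prompt to the model only when the action is not `"block"`, and the same inspection could be run on the model’s response on the way back.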

“The most basic advice is to get an understanding of which LLM tools are being used in the organization, by employees or by applications,” said Schulman. “Following that, understand how they are used, and for which purposes. These two actions alone will surface a critical discussion about what they want and what they need to protect.”

The platform’s key features include:

- Shadow AI Discovery: Security experts can discern what tools and models are active, identify users and gain insights.

- LLM data-flow monitoring and observability: The system tracks and logs every data transmission entering and exiting an organization.

- Real-time detection and alerting.

- Blocking and end-to-end protection: Ensures that prompts and generated outputs created by employees or models align with security policies.

- User-friendly dashboard.

Safely leveraging breakthrough technology

Lasso sets itself apart because it’s “not a mere feature” or a security tool such as data loss prevention (DLP) aimed at specific use cases. Rather, it is a full suite “focused on the LLM world,” said Schulman.

Security teams gain complete control over every LLM-related interaction within an organization and can craft and enforce policies for different groups and users.

“Organizations need to adopt progress, and they have to adopt LLM technologies, but they have to do it in a secure and safe way,” said Schulman.

Blocking the use of technology is not sustainable, he noted, and enterprises that adopt gen AI without a dedicated risk plan will suffer.

Lasso’s goal is to “equip organizations with the right security toolbox for them to embrace progress, and leverage this truly remarkable technology without compromising their security postures,” said Schulman.
