It’s here: months after it was first announced, Nightshade, a new, free software tool allowing artists to “poison” AI models seeking to train on their works, is now available for artists to download and use on any artworks they see fit.

Developed by computer scientists on the Glaze Project at the University of Chicago under Professor Ben Zhao, the tool essentially works by turning AI against AI. It makes use of the popular open-source machine learning framework PyTorch to identify what’s in a given image, then applies a tag that subtly alters the image at the pixel level so other AI programs see something totally different than what’s actually there.

It’s the second such tool from the team: nearly one year ago, the team unveiled Glaze, a separate program designed to alter digital artwork at a user’s behest to confuse AI training algorithms into thinking the image has a different style than what is actually present (such as different colors and brush strokes than are really there).

But whereas the Chicago team designed Glaze to be a defensive tool — and still recommends artists use it in addition to Nightshade to prevent an artist’s style from being imitated by AI models — Nightshade is designed to be “an offensive tool.”

An AI model that ended up training on many images altered or “shaded” with Nightshade would likely erroneously categorize objects going forward for all users of that model, even in images that had not been shaded with Nightshade.

“For example, human eyes might see a shaded image of a cow in a green field largely unchanged, but an AI model might see a large leather purse lying in the grass,” the team further explains.

Therefore, an AI model trained on images of a cow shaded to look like a purse would start to generate purses instead of cows, even when the user asked for the model to make a picture of a cow.
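The effect can be illustrated with a deliberately tiny sketch. It is a toy under stated assumptions, not how a real image generator is trained and not Nightshade's method: each "image" is reduced to a hypothetical two-number feature vector, and the "model" simply averages the features it sees for each caption. Once enough purse-like images captioned "cow" enter the training set, the model's idea of a cow drifts toward a purse.

```python
# Toy illustration only; real image generators are far more complex.
# Each "image" is reduced to a hypothetical 2-number feature vector.

COW_FEATURES = (1.0, 0.0)    # what a cow looks like to the toy model
PURSE_FEATURES = (0.0, 1.0)  # what a purse looks like to the toy model


def train(samples):
    """Learn one average feature vector per caption."""
    sums, counts = {}, {}
    for label, (x, y) in samples:
        sx, sy = sums.get(label, (0.0, 0.0))
        sums[label] = (sx + x, sy + y)
        counts[label] = counts.get(label, 0) + 1
    return {
        label: (sx / counts[label], sy / counts[label])
        for label, (sx, sy) in sums.items()
    }


def generate(model, prompt):
    """Report which concept the learned features for `prompt` resemble most."""
    x, y = model[prompt]

    def distance(target):
        return (x - target[0]) ** 2 + (y - target[1]) ** 2

    return "cow" if distance(COW_FEATURES) < distance(PURSE_FEATURES) else "purse"


clean = [("cow", COW_FEATURES)] * 10 + [("purse", PURSE_FEATURES)] * 10
# Shaded images: captioned "cow", but their features resemble a purse.
poisoned = [("cow", PURSE_FEATURES)] * 30

print(generate(train(clean), "cow"))             # cow
print(generate(train(clean + poisoned), "cow"))  # purse
```

In this toy, the poisoned samples have to outnumber the clean ones before the output flips, which mirrors the article's point that a model would need to train on many shaded images for the effect to take hold.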

Requirements and how Nightshade works

Artists seeking to use Nightshade must have a Mac with Apple chips inside (M1, M2 or M3) or a PC running Windows 10 or 11. The tool can be downloaded for both operating systems from the Nightshade project's website. The Windows version is also capable of running on a PC’s GPU, provided it is an Nvidia GPU that appears on the team's list of supported hardware.

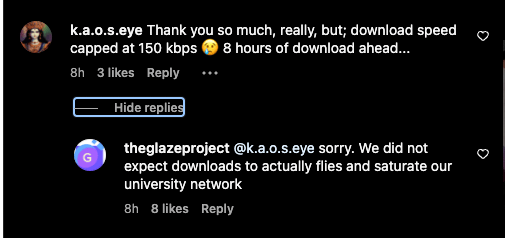

Some users have also reported long download times due to the overwhelming demand for the tool, as long as eight hours in some cases (the two versions are 255MB and 2.6GB in size for Mac and PC, respectively).

Users must also agree to the Glaze/Nightshade team’s end-user license agreement (EULA), which stipulates they use the tool on machines under their control and don’t modify the underlying source code, nor “Reproduce, copy, distribute, resell or otherwise use the Software for any commercial purpose.”

Nightshade v1.0 “transforms images into ‘poison’ samples, so that [AI] models training on them without consent will see their models learn unpredictable behaviors that deviate from expected norms, e.g. a prompt that asks for an image of a cow flying in space might instead get an image of a handbag floating in space,” states a blog post from the development team on its website.

That is, using Nightshade v1.0 to “shade” an image transforms it into a new version with the help of open-source AI libraries, ideally subtly enough that it looks little different to the human eye but appears to contain totally different subjects to any AI model training on it.
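To see how a change too small for a person to notice can still flip what a model "sees," consider the following minimal sketch. It is not Nightshade's algorithm: it assumes a hypothetical linear classifier standing in for a real vision model, and uses a well-known textbook technique (a fast-gradient-sign-style step) in which every pixel is nudged by at most a small budget, here called `EPSILON`, in whichever direction lowers the "cow" score. All names and numbers are illustrative.

```python
# Toy illustration only: a linear "classifier" stands in for a real vision model.
# This is NOT Nightshade's algorithm; all names and numbers are hypothetical.

N_PIXELS = 1024
EPSILON = 0.03  # maximum change allowed per pixel, on a 0.0-1.0 brightness scale


def sign(value):
    return 1.0 if value > 0 else -1.0 if value < 0 else 0.0


def classify(pixels, weights):
    """A positive score means "cow"; anything else means "purse"."""
    score = sum(w * p for w, p in zip(weights, pixels))
    return "cow" if score > 0 else "purse"


def perturb(pixels, weights, epsilon):
    """Nudge every pixel by at most epsilon in the direction that lowers the score."""
    return [
        min(1.0, max(0.0, p - epsilon * sign(w)))
        for p, w in zip(pixels, weights)
    ]


weights = [1.0 if i % 2 == 0 else -1.0 for i in range(N_PIXELS)]
original = [0.51 if i % 2 == 0 else 0.49 for i in range(N_PIXELS)]
shaded = perturb(original, weights, EPSILON)

largest_change = max(abs(a - b) for a, b in zip(original, shaded))
print(classify(original, weights))  # cow
print(classify(shaded, weights))    # purse
print(f"largest per-pixel change: {largest_change:.2f}")  # 0.03
```

No pixel moves by more than 3% of its range, yet the many tiny changes add up across the whole image and flip the toy model's answer.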

In addition, the tool is resilient to most of the typical transformations and alterations a user or viewer might make to an image. As the team explains:

“You can crop it, resample it, compress it, smooth out pixels, or add noise, and the effects of the poison will remain. You can take screenshots, or even photos of an image displayed on a monitor, and the shade effects remain. Again, this is because it is not a watermark or hidden message (steganography), and it is not brittle.”

Applause and condemnation

While some artists have rushed to download Nightshade v1.0 and are already making use of it — among them, Kelly McKernan, one of the former lead artist plaintiffs in the ongoing class-action copyright infringement lawsuit against AI art and video generator companies Midjourney, DeviantArt, Runway, and Stability AI — some web users have complained about it, suggesting it is tantamount to a cyberattack on AI models and companies. (VentureBeat uses Midjourney and other AI image generators to create article header artwork.)

The Glaze/Nightshade team, for its part, denies it is seeking destructive ends, writing: “Nightshade’s goal is not to break models, but to increase the cost of training on unlicensed data, such that licensing images from their creators becomes a viable alternative.”

In other words, the creators are seeking to make it so that AI model developers must pay artists to train on data from them that is uncorrupted.

The latest front in the fast-moving fight over data scraping

How did we get here? It all comes down to how AI image generators have been trained: by scraping data from across the web, including original artworks posted by artists who had no prior express knowledge of, or decision-making power over, this practice, and who say the resulting AI models trained on their works threaten their livelihoods by competing with them.

As VentureBeat has reported, data scraping involves letting simple programs called “bots” scour the internet and copy and transform data from public facing websites into other formats that are helpful to the person or entity doing the scraping.

Scraping was common practice on the internet long before the advent of generative AI, and it is roughly the same technique Google and Bing use to crawl and index websites for search results.
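As a rough illustration of what such a bot does, the sketch below pulls image addresses and their descriptive text out of a web page using only Python's standard library. The page content, the URL, and the `ImageCollector` class are hypothetical stand-ins; a real scraper would download pages over the network and process them at far greater scale.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

# Hypothetical page content; a real bot would download this over HTTP.
PAGE_URL = "https://example.com/gallery/"
PAGE_HTML = """
<html><body>
  <h1>Portfolio</h1>
  <img src="cow.png" alt="A cow in a green field">
  <img src="/art/purse.jpg" alt="A large leather purse">
  <p>Contact me for commissions.</p>
</body></html>
"""


class ImageCollector(HTMLParser):
    """Collects (absolute image URL, alt text) pairs from a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.records = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src")
        if src:
            # Resolve relative paths against the page address.
            absolute_url = urljoin(self.base_url, src)
            self.records.append((absolute_url, attributes.get("alt", "")))


collector = ImageCollector(PAGE_URL)
collector.feed(PAGE_HTML)
for url, caption in collector.records:
    print(url, "|", caption)
```

The output is a list of image and caption pairs, the same kind of data an image generator's training set pairs together.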

But it has come under new scrutiny from artists, authors, and creatives who object to their work being used without their express permission to train commercial AI models that may compete with or replace their work product.

AI model makers defend the practice as not only necessary to train their creations, but as lawful under “fair use,” the legal doctrine in the U.S. that states prior work may be used in new work if it is transformed and used for a new purpose.

Though AI companies such as OpenAI have introduced “opt-out” code that objectors can add to their websites to avoid being scraped for AI training, the Glaze/Nightshade team notes that “Opt-out lists have been disregarded by model trainers in the past, and can be easily ignored with zero consequences. They are unverifiable and unenforceable, and those who violate opt-out lists and do-not-scrape directives can not be identified with high confidence.”
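For context, an opt-out of this kind typically takes the form of a few lines in a site's robots.txt file. The sketch below assumes a hypothetical site that blocks "GPTBot," the user agent OpenAI documents for its crawler, while allowing everyone else, and uses Python's standard `urllib.robotparser` module to show how a well-behaved crawler would read those rules.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt a site owner might publish. "GPTBot" is the user agent
# OpenAI documents for its crawler; the site and paths are hypothetical.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

url = "https://example.com/gallery/cow.png"
print(parser.can_fetch("GPTBot", url))            # False
print(parser.can_fetch("ExampleSearchBot", url))  # True
```

Note that the check runs on the crawler's side. Nothing in the file itself stops a bot that never performs it, which is the weakness the Glaze/Nightshade team describes.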

Nightshade, then, was conceived and designed as a tool to “address this power asymmetry.”

The team further explains its end goal:

“Used responsibly, Nightshade can help deter model trainers who disregard copyrights, opt-out lists, and do-not-scrape/robots.txt directives. It does not rely on the kindness of model trainers, but instead associates a small incremental price on each piece of data scraped and trained without authorization.”

Basically: make widespread data scraping more costly to AI model makers, and make them think twice about doing it, and thereby have them consider pursuing licensing agreements with human artists as a more viable alternative.

Of course, Nightshade is not able to reverse the flow of time: any artworks scraped prior to being shaded by the tool were still used to train AI models, and shading them now may impact the model’s efficacy going forward, but only if those images are re-scraped and used again to train an updated version of an AI image generator model.

There is also nothing on a technical level stopping someone from using Nightshade to shade AI-generated artwork or artwork they did not create, opening the door to potential abuses.
